
Enterprises rely on the Databricks Lakehouse, including for monitoring batch and streaming pipelines.

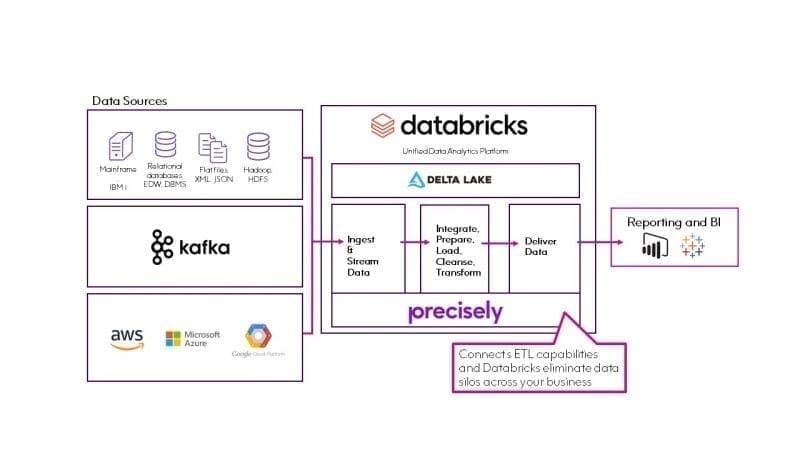

What's all this talk about Databricks and the Databricks Lakehouse? In this hands-on workshop, you'll get an overview of how Databricks enables Live Nation teams with data engineering needs to build a lakehouse architecture that delivers data warehouse performance with data lake economics, all powered by open source technologies. Please join us to learn more about your new data and AI platform.

A data lakehouse combines the best of data warehouses and data lakes into a single, unified architecture that can serve all data use cases, including BI, streaming analytics, data science, and machine learning.

Databricks uses Delta Lake by default for all reads and writes and builds upon the ACID guarantees provided by the open source Delta Lake protocol. Many of the optimizations and products in the Databricks Lakehouse Platform build upon the guarantees provided by Apache Spark and Delta Lake. Databricks originally developed the Delta Lake protocol and continues to actively contribute to the open source project.

From 14 March to 19 April 2023, discover the power of the Databricks Lakehouse at our series of live Lakehouse Days across EMEA. In this blog post, we will also explore how to build a data labeling app using Python, Dash, and the Databricks Lakehouse platform. Telmai, for its part, brings 7 capabilities to the Databricks platform.
